Safety with Agency: Human-Centered Safety Filter with Application to AI-Assisted Motorsports

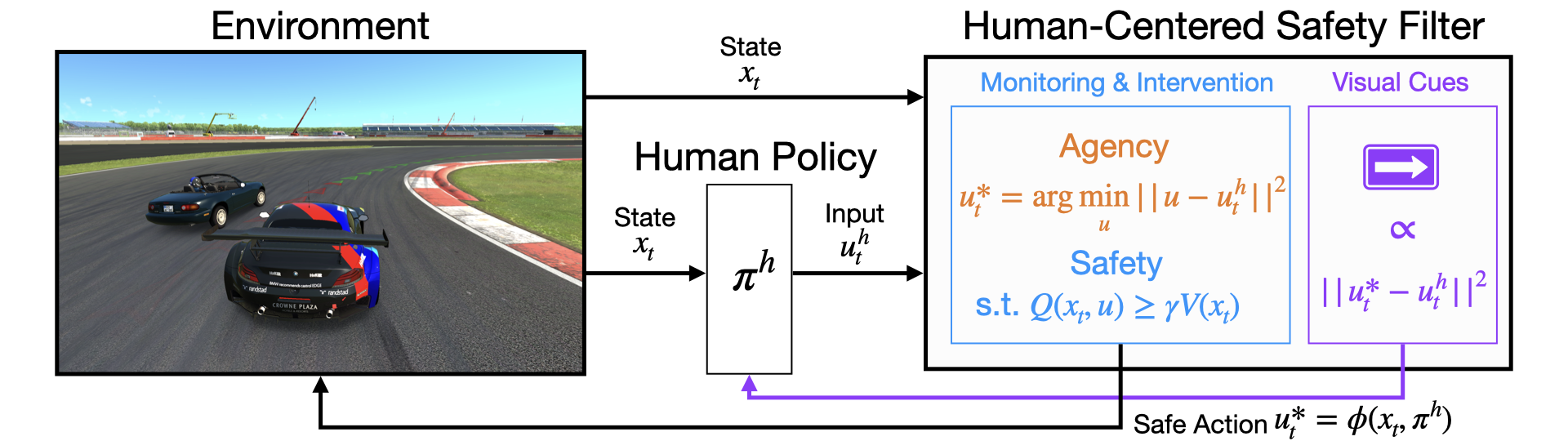

We propose a human-centered safety filter (HCSF) for shared autonomy that significantly enhances system safety without compromising human agency. Our HCSF is built upon a novel state–action control barrier function (Q-CBF) safety filter, which requires no knowledge of the system dynamics for either synthesis or runtime safety enforcement.

Contributions

We introduce, to the best of our knowledge, the first fully model-free control barrier function (CBF) safety filter. We learn a neural safety value function through interactions with a black-box system and, at deployment, enforce a safety constraint based on a novel Q-CBF without any knowledge of the system dynamics (e.g., a control-affine model). Both the synthesis and the deployment of our Q-CBF scale to high-dimensional systems.

We build upon the learned Q‑CBF and demonstrate our HCSF in Assetto Corsa (AC), a high‑fidelity racing simulator with black‑box dynamics, where the filter is pushed to the limit against all potential failure modes by real human drivers with diverse skill levels. To the best of our knowledge, this is the first time a safety filter has been synthesized, deployed, and evaluated in such a high‑dimensional, dynamic shared autonomy setting involving human operators.

We conduct an extensive in‑person user study with 83 human participants and conclude—with statistical significance in both trajectory data and human driver responses—that our HCSF considerably improves safety and user satisfaction without compromising human agency or comfort relative to having no safety filter. Furthermore, when compared to a conventional safety filter, our HCSF offers significant gains in human agency, comfort, and overall satisfaction while maintaining at least the same level of robustness—if not exceeding it.

Approach

Our HCSF builds upon a novel Q-CBF safety filter, which is, to the best of our knowledge, the first fully model-free CBF safety filter. We first prove that the safety value function is a valid discrete-time CBF. Then, we scalably learn a state–action safety value function through black-box interactions with the system via model-free, RL-based Hamilton–Jacobi (HJ) reachability analysis. At runtime, we leverage the learned state–action safety value function to enforce a novel Q-CBF safety constraint, which requires no knowledge of the system dynamics for either safety monitoring or intervention. Therefore, our Q-CBF safety filter is fully model-free, from synthesis through deployment, unlocking a wide range of applications for complex, black-box systems.
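To make the idea concrete, the following is a rough sketch of how a state–action value function can stand in for a dynamics model in a discrete-time CBF condition. The symbols $f$, $h$, $V$, $Q$, and $\gamma$ are notation assumed here for illustration, and the exact constraint used in the paper may differ. The standard discrete-time CBF condition for a function $h$ under dynamics $x_{t+1} = f(x_t, u_t)$ reads

$$
h\big(f(x_t, u_t)\big) - h(x_t) \;\ge\; -\gamma\, h(x_t), \qquad \gamma \in (0, 1],
$$

which requires evaluating $f$. If the safety value function $V(x) = \max_u Q(x, u)$ plays the role of $h$, and if we assume the learned $Q(x, u)$ lower-bounds $V\big(f(x, u)\big)$, then a constraint of the form

$$
Q(x_t, u_t) \;\ge\; (1 - \gamma)\, V(x_t)
$$

implies the condition above while needing only queries of the learned $Q$, with no explicit model of $f$.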

During deployment, our HCSF solves an optimal control problem (OCP) at each timestep to compute a safe action that minimally deviates from the human's intended action while satisfying the Q-CBF safety constraint. Thus, our HCSF actively promotes human agency while ensuring the system remains within the maximal safe set. Whenever an intervention occurs, our HCSF provides visual cues that reflect the intervention magnitude at each input channel, fostering transparent collaboration with the human operator.
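The per-timestep filtering step can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: `q_fn` is a hypothetical callable standing in for the learned state–action safety value function, the constraint `Q(x, u) >= (1 - gamma) * V(x)` is an assumed form of the Q-CBF condition, and the OCP is approximated by a search over a finite candidate set rather than solved by a numerical optimizer.

```python
import numpy as np


def q_cbf_filter(q_fn, state, human_action, candidates, gamma=0.75):
    """Pick the action closest to the human's that meets an assumed Q-CBF constraint.

    q_fn(state, action) -> float is a hypothetical learned safety critic.
    Returns (action, intervened).
    """
    human_action = np.atleast_1d(np.asarray(human_action, dtype=float))
    candidates = np.asarray(candidates, dtype=float).reshape(-1, human_action.size)
    # Row 0 is the human's own action, so it is returned unchanged when feasible.
    actions = np.vstack([human_action[None, :], candidates])
    q_values = np.array([q_fn(state, u) for u in actions])

    # V(x) is approximated by the best Q-value over the evaluated actions.
    value = q_values.max()
    feasible = q_values >= (1.0 - gamma) * value

    if not feasible.any():
        # No action meets the constraint (e.g., V(x) < 0): fall back to the safest one.
        best = int(np.argmax(q_values))
        return actions[best], best != 0

    # Minimal deviation from the human's action among feasible actions.
    distances = np.linalg.norm(actions - human_action, axis=1)
    distances[~feasible] = np.inf
    chosen = int(np.argmin(distances))
    return actions[chosen], chosen != 0
```

The per-channel difference between the returned action and `human_action` is one natural quantity to drive intervention cues, although how the paper computes its visual cues is not specified here.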

Results

1. Our HCSF significantly improves safety compared to unassisted driving.

2. Our HCSF preserves human agency significantly better than a least-restrictive safety filter.

3. Our HCSF significantly improves smoothness of interventions compared to a least-restrictive safety filter.

Citation

@inproceedings{oh2025safety,
  title={{Safety with Agency: Human-Centered Safety Filter with Application to AI-Assisted Motorsports}},
  author={Oh, Donggeon David and Lidard, Justin and Hu, Haimin and Sinhmar, Himani and Lazarski, Elle and Gopinath, Deepak and Sumner, Emily S and DeCastro, Jonathan A and Rosman, Guy and Leonard, Naomi Ehrich and Fisac, Jaime Fern{\'a}ndez},
  booktitle={Proceedings of Robotics: Science and Systems (RSS)},
  doi={10.48550/arXiv.2504.11717},
  year={2025},
}

Authors

*D. D. Oh and J. Lidard contributed equally.

Elle Lazarski